I’m fairly skeptical about GenAI. I’ve managed to stay away from all the copilots so far and try to avoid their output like the plague. The text it generates feels soulless and boring, and the code it generates features non-existent APIs. The term “slop” has been used to describe AI-generated content, which I consider fitting.

I think that’s enough of a rant to show you I’m not here to push yet another AI hype topic. I try to find things it enables me to do that I couldn’t do (or didn’t want to) previously. One such area is improving accessibility. I have a couple of blogs in my RSS reader which cover that topic. Recently, Marcus Herrmann wrote a short post about 80/20 accessibility. On his list of quick wins, he features alt text attributes for images.

This attribute helps people who use screen readers understand what an image displays. It makes content more accessible. Unfortunately, not all of the pictures in our posts have the HTML alt attribute set. I think this is the kind of accessibility issue that GenAI may be able to help us with, because I don’t feel like going through hundreds of posts, finding the images, and setting this attribute manually.

If you’re very impatient, the code for this post is available on GitHub. Go check it out.

Let’s talk about how we’re going to do this. As with any great plan, this one features three steps.

- Discovery: Parse Markdown articles and extract image links

- Inference: Use a foundation model to describe the image in the context of the article

- Update: Write the new image descriptions back into the Markdown

Wait a minute - why Markdown? Our blog is generated from Markdown files to separate styling from content (more or less), and an alternative description of an image needs to be added to the Markdown documents. Specifically, we need to update the image links, which look like this: `![descriptive text](path/to/image)`

The descriptive text ends up as the alt attribute on the HTML img element, and the path to the image is either a relative path or an absolute image URL. In our case, the static site generator Hugo parses these Markdown files and assets (images, etc.) and converts them into static HTML pages. I want my solution to be independent of Hugo, though, because Markdown is also used in other places, such as README files. For now, I’ve also decided to ignore external images in the form of URLs, i.e., http:// or https:// links, mostly because we’re not using them.

Another consideration is cost. I want to be able to periodically re-run this solution to update new articles without calling the foundation model for all previously seen images, as this costs money. This means we need some kind of state to keep track of what has already been processed. State almost always makes things more complicated, and that’s also true here.

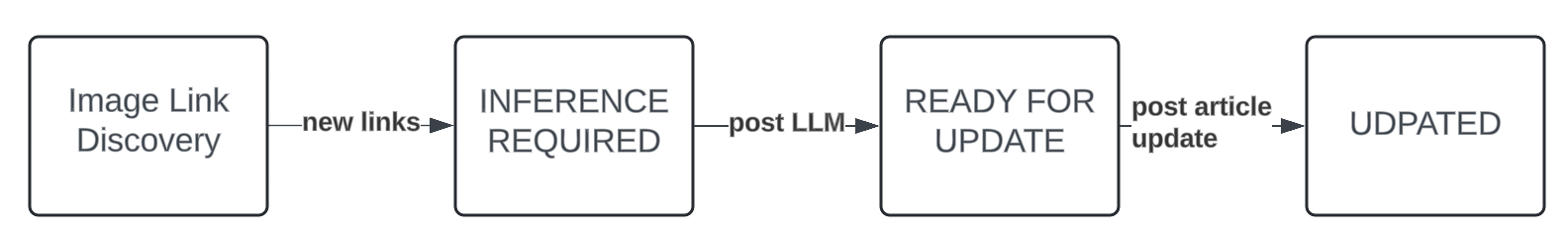

I tried to keep it as simple as possible, so I added a minimal metadata storage mechanism. The idea is that the discovery step checks every image link it finds against the metadata store. If the link is already in there, we skip it. Otherwise, we add it with the status INFERENCE_REQUIRED. Next, we run the script to generate our alt texts, which are also stored in the metadata storage, and set the status to READY_FOR_UPDATE. The final step reads all READY_FOR_UPDATE items, updates the underlying Markdown documents, and sets their status to UPDATED.

This makes manual intervention possible by editing the metadata on disk as it’s just a JSON document. We can check it into version control as well so that all contributors have the same data, but that’s optional.
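To make the status flow more concrete, here is a minimal sketch of such a store. The function names, the key format, and the record layout are assumptions for illustration, not the code from the repository. Only the three status values come from the description above.

```python
import json
import pathlib

# Status values as described in the text
INFERENCE_REQUIRED = "INFERENCE_REQUIRED"
READY_FOR_UPDATE = "READY_FOR_UPDATE"
UPDATED = "UPDATED"


def load_store(path: pathlib.Path) -> dict:
    """Load the metadata JSON, or start with an empty store."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {}


def save_store(path: pathlib.Path, store: dict) -> None:
    """Write the store as readable JSON so it can be edited by hand."""
    path.write_text(json.dumps(store, indent=2, sort_keys=True), encoding="utf-8")


def register_link(store: dict, key: str) -> bool:
    """Add a newly discovered link; return False if it was seen before."""
    if key in store:
        return False
    store[key] = {"status": INFERENCE_REQUIRED, "alt_text": None}
    return True


def record_alt_text(store: dict, key: str, alt_text: str) -> None:
    """Store the generated alt text and mark the link as ready for update."""
    store[key].update(status=READY_FOR_UPDATE, alt_text=alt_text)


def mark_updated(store: dict, key: str) -> None:
    """Mark the link as written back to the Markdown document."""
    store[key]["status"] = UPDATED
```

Because a link that is already in the store is never registered twice, re-running the discovery step doesn’t trigger another paid model call for images that were processed before.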

Going through all of the code would be a bit much, so we’ll focus on some (in my opinion) interesting parts here. If you want to dive deeper, go check out the code on GitHub. The image link discovery is handled by the discover-image-links utility and is pretty straightforward. I’m using a regular expression with named capture groups (that just allows me to access the data more easily) to find all the image links that match the pattern outlined above.

import logging
import re

# Non-greedy quantifiers keep two image links on the same line from
# being merged into a single match.
IMAGE_LINKS_PATTERN = re.compile(
    r"(?P<full_match>!\[(?P<alt_text>.*?)\]\((?P<path>.*?)\))"
)

LOGGER = logging.getLogger(__name__)


def extract_image_links_from_markdown_doc(
    doc: str,
) -> list[ImageLink]:
    # ImageLink is a small record type that is not shown in this excerpt.
    links = []
    for match in IMAGE_LINKS_PATTERN.finditer(doc):
        captured_patterns = match.groupdict()
        full_match = captured_patterns["full_match"]
        # Treat an empty alt text as missing.
        alt_text = (
            captured_patterns["alt_text"]
            if captured_patterns["alt_text"] != ""
            else None
        )
        path = captured_patterns["path"]
        links.append(ImageLink(full_match, alt_text, path))

    return links
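To see what this kind of extraction returns, here is a self-contained sketch you can run on its own. The ImageLink class is a hypothetical stand-in for the project’s record type, and the pattern uses non-greedy quantifiers so that two links on one line are matched separately.

```python
import re
from typing import NamedTuple, Optional


class ImageLink(NamedTuple):
    # Hypothetical stand-in for the project's own ImageLink type
    full_match: str
    alt_text: Optional[str]
    path: str


IMAGE_LINKS_PATTERN = re.compile(
    r"(?P<full_match>!\[(?P<alt_text>.*?)\]\((?P<path>.*?)\))"
)


def extract_image_links(doc: str) -> list[ImageLink]:
    """Return one ImageLink per Markdown image link found in doc."""
    return [
        # An empty alt text is normalised to None
        ImageLink(m["full_match"], m["alt_text"] or None, m["path"])
        for m in IMAGE_LINKS_PATTERN.finditer(doc)
    ]


sample = "Intro ![](img/dog.png) and ![A cat](img/cat.png)\n"
for link in extract_image_links(sample):
    print(link)
```

The first link in the sample has no alt text, so it is the one a later step would need to send to the model.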

I could have used a “proper” Markdown parser for this, but I wanted to keep it light, and I’m fine with this not covering all edge cases, such as image links inside code blocks. Actually finding the Markdown files in a directory is super convenient in Python thanks to pathlib from the standard library and its glob pattern support.

import pathlib

# Path.glob() accepts case_sensitive from Python 3.12 onwards
article_base_path: pathlib.Path = args.markdown_base_dir
markdown_docs = article_base_path.glob("**/*.md", case_sensitive=False)

for path in markdown_docs:
    # Do something
    ...

Resolving the relative paths from the image links, e.g., img/2024/08/dog.png, to the actual files on disk was slightly more involved. I just used a brute-force approach: the tool tries the path relative to the article file itself and relative to the current working directory, and you can supply additional asset base directories through the --asset-base-dir parameter.
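A minimal sketch of that brute-force lookup could look like this. The function name, its signature, and the order in which the candidates are tried are my assumptions here, not necessarily what the utility does.

```python
import pathlib
from typing import Iterable, Optional


def resolve_image_path(
    link_path: str,
    article_path: pathlib.Path,
    asset_base_dirs: Iterable[pathlib.Path] = (),
) -> Optional[pathlib.Path]:
    """Return the first existing candidate for link_path, or None."""
    if link_path.startswith(("http://", "https://")):
        return None  # external images are ignored

    # A leading slash would otherwise discard the base directory when joining
    relative = link_path.lstrip("/")
    candidates = [
        article_path.parent / relative,  # relative to the article itself
        pathlib.Path.cwd() / relative,  # relative to the working directory
        *(base / relative for base in asset_base_dirs),  # extra asset dirs
    ]
    for candidate in candidates:
        if candidate.is_file():
            return candidate.resolve()
    return None
```

For a Hugo-style layout, calling it with the article path and the static directory as an additional asset base dir would find img/2024/08/dog.png under static, provided the file exists there.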

Once we’ve discovered all the images, it’s time to make API calls to Amazon Bedrock and use an LLM to describe them. This is handled by the generate-alt-texts utility.

import base64
import json

import boto3


def get_alt_text_for_image(
    article_content: str, image_link_record: ImageLinkRecord
) -> str:
    client = boto3.client("bedrock-runtime")

    # Extract 1000 chars surrounding the image link
    article_content_short = ...  # omitted for brevity

    prompt = f"""
    Summarize the following image into a single sentence and keep your summary as brief as possible.
    You can use this text between <start> and <end> as context, the image is referred to as {image_link_record['original_link_md']}
    <start>
    {article_content_short}
    <end>
    Exclusively output the content for an HTML Image alt-attribute, one line only, no code, no introduction, only the text, focus on accessibility.
    """

    # Read the image file
    with open(image_link_record["abs_img_path"], "rb") as image_file:
        image_data = image_file.read()

    payload = {
        "modelId": "anthropic.claude-3-haiku-20240307-v1:0",
        "input": {
            "anthropic_version": "bedrock-2023-05-31",
            "max_tokens": 512,
            "messages": [
                {
                    "role": "user",
                    "content": [
                        {"type": "text", "text": prompt},
                        {
                            "type": "image",
                            "source": {
                                "type": "base64",
                                # The media type is hard-coded to JPEG here
                                "media_type": "image/jpeg",
                                "data": base64.b64encode(image_data).decode("utf-8"),
                            },
                        },
                    ],
                }
            ],
        },
    }

    response = client.invoke_model(
        modelId=payload["modelId"], body=json.dumps(payload["input"])
    )

    # The generated text is in the first content block of the response
    response_body = response["body"].read()
    result = json.loads(response_body.decode("utf-8"))
    alt_tag = result.get("content", [{}])[0].get("text")

    return alt_tag

This function prompts Anthropic’s Claude 3 Haiku to describe the image with a focus on both a short description and accessibility. As additional context, we’re giving it a part of the Markdown document that surrounds the image link, which sometimes helps to improve the description. Special thanks to my colleague Franck Awounang Nekdem, who helped me optimize the prompt so we got some decent results.
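The listing leaves out how the surrounding 1000 characters are extracted, and it always sends image/jpeg as the media type. Here is a rough sketch of how both pieces could be handled. Both helper names are hypothetical, the windowing logic is my assumption rather than the repository’s code, and the set of supported formats is what I understand Claude 3 to accept.

```python
import mimetypes


def context_around(article: str, link_md: str, window: int = 1000) -> str:
    """Return about `window` characters of text centred on link_md."""
    position = article.find(link_md)
    if position == -1:
        return article[:window]  # link not found: fall back to the start
    half = window // 2
    start = max(0, position - half)
    end = min(len(article), position + len(link_md) + half)
    return article[start:end]


# Image formats assumed to be accepted by the model in this sketch
SUPPORTED_MEDIA_TYPES = {"image/jpeg", "image/png", "image/gif", "image/webp"}


def media_type_for(image_path: str) -> str:
    """Guess the media type from the file extension."""
    guessed, _encoding = mimetypes.guess_type(image_path)
    if guessed not in SUPPORTED_MEDIA_TYPES:
        raise ValueError(f"Unsupported image type for {image_path}: {guessed}")
    return guessed
```

The guess is based on the file extension only, so a mislabeled file would still slip through. Raising an error for anything else at least keeps unsupported files from causing a paid API call that is bound to fail.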

Fun fact: I didn’t feel like diving into the JSON interface of Claude, so I tried generating this part with Microsoft Copilot, which proceeded to invent APIs and formats that don’t exist, and with Amazon Q, which refused to help me at all. I can’t seem to stop complaining about GenAI, so back to the topic.

The last part is just about updating the Markdown documents with the new alt texts. This is handled through the update-alt-texts utility, which updates the content in place by default, so I highly recommend you do this in the context of a Git repo or a similar version control mechanism. This is just search and replace, nothing interesting going on there. Let’s see how this works in practice.
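For completeness, the search and replace boils down to something like the following sketch. The function name and the bracket sanitizing are my additions for illustration. The real utility may do this differently.

```python
def replace_alt_text(doc: str, original_link_md: str, alt_text: str, path: str) -> str:
    """Replace every occurrence of the original link with one carrying alt_text."""
    # Square brackets and line breaks would break the link syntax
    safe_alt = alt_text.replace("[", "(").replace("]", ")").replace("\n", " ").strip()
    new_link = f"![{safe_alt}]({path})"
    return doc.replace(original_link_md, new_link)
```

Since the replacement works on the exact original link text, the rest of the document stays byte for byte the same, which keeps the diff in version control easy to review.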

I’ve decided not to start with the tecRacer blog, because there’s a lot of content and I want another pair of eyes to look over this first. Instead, I’ve tested this on the repository of my personal blog.

# Step 1: Discover image links
$ discover-image-links --asset-base-dir static content

# Step 2: Generate alt texts
$ generate-alt-texts

# Step 3: Update Markdown
$ update-image-links

The whole procedure didn’t take more than a couple of minutes. I manually compared the before and after with the original images, and I’m pretty happy with the result. After seeing how it treated the missing alt texts, I actually decided to regenerate all alt texts in my articles, which can be done by replacing the first command with this one.

# Step 1: Discover image links, even if they have an alt text
# --ue or --update-existing
$ discover-image-links --asset-base-dir static content --ue

Beware that this will take more time and cost more money. I’ve noticed very few descriptions that are a bit wonky, and even those are still leagues ahead of my usual three-word alt texts, so I’ve just edited them manually. It wouldn’t be GenAI if you didn’t need to do some of the work yourself in the end.

Go check out the code on GitHub, and improve the accessibility of your website.

— Maurice

Photo by Daniel Ali on Unsplash